|

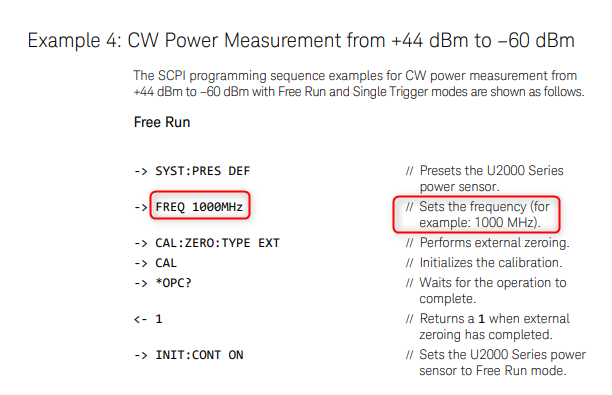

This is a list of best practices I try to remember for myself when I do my work. I don’t always succeed in doing everything on the list, but this is what I strive to do. I realize some of these things might be part of a process you have to follow as a test engineer and if so that’s great. However, there are lots of times when just your own best practices are all you will have to go on. In those times having some discipline for yourself can save a lot of pain down the road. 1. Don't trust a measurement I've been tricked by measurements pleanty of times. When writing a new test with an unfamiliar instrument, there are a lot of things that can go wrong. Before you send a SCPI :meas and :fetch and call it good, there are many factors to consider. First, I try to understand how the instrument I am using works. It pays off to carefully read the manual. I don’t mean sit down and read the whole manual, but I’ll start with an example that is close to what I want to do and try to understand how it works. I might try removing some of the commands and see what happens. I often find I need to look up the individual commands and see what the description is. Here’s an example. I’ve written before about how I used a Keysight U2001A power sensor on a project a while ago and I remember how when I first looked at the examples they all have this frequency command in there. Like this: You can see it just says FREQ 1000MHz and the comment doesn’t add much explanation. At first you might think that you are setting the instrument to measure the frequency of interest. Well, remember this is a power sensor and it inherently is a broadband measurement, and there is no frequency selectivity. If you dig into that command you’ll learn that this is a command to query previously stored frequency specific measurement offsets from memory on-board the instrument. Finally, when you think you have your measurement correct, find a second way to verify it like with a different instrument.

2. Write everything down This sounds like a pain and it’s easy to skip, but I find it’s more efficient in the long run to create a log of my work and debugging. I like to use OneNote, but I suppose anything would work. Start with a blank page or a page is dedicated to this task. Then, as you work through a problem take pictures of any important physical setups and write down settings or commands. Also record measurements and results that you get. This seems to help me from getting stuck in the short term and it's often useful to show others what I have tried to get something to work or just remember what I did. Another benefit is you can often reuse pictures and step-by-step descriptions to write really good documentation. 3. Get out and talk to people Not to put a label on engineers here, but it may not be in every engineer’s nature to go out and talk to people. In the course of a project, I try to get out of my cube and talk to other test engineers, talk to manufacturing, quality and design engineers. People generally like to help and they can often save you a ton of time. Weather it’s for a technical problem or a project management type problem, the more you can get involved the better. It also helps to learn who knows what, because you don’t have to know everything yourself, but you need to know who knows everything so you can ask them. Lots of communication! 4. Study the R&R I’m not crazy about using formal Gage R&R to evaluate test systems and electrical measurements, but it’s still worth doing to get an idea of how repeatable and reproducible your measurements are. I usually try to do a simple study where the test systems function as operators and test maybe 5 or 10 DUTs across three test systems. If you happen to have access to Minitab, it has a good tool called Gage Run Chart. It will give you a good visual evaluation of repeatability and reproducibility without all the percent of variation stuff that comes in the full R&R. All of the statistics produced by the full R&R can often just be misleading. Of course, in some industries there is a strict validation process for tests and test systems that will dictate exactly what to do. 5. Show my system to the users Put another way, work with the customer. I want to show my software and fixture operator interfaces to the people who are going to be using them as early as I can. It might not be fun, but it’s easier to get the feedback earlier when it’s still easy to change because they are going to make me change it one way or another. Lots of communication! 6. Try to break it Everyone has heard this when developing systems and software. Try to validate the system and perform all the tasks an operator would see. Make sure the test system can catch the defects it is intended it to. Try to think of ways the mechanical fixtures could be confusing or cause damage to the DUT. There is the Lean concept of Poke Yoke, that is making things mistake proof, I want to always keep that in mind. Think about how can a test system be abused or misused just to get the test to pass. 7. Be careful of stuff you don't control Oh man, I’ve been scorched by this before... Be careful if you have to get support from other parts of your company to do your testing. Especially if this support isn’t really what they would consider to be their job (even though it totally is). For example, if you are doing a test and you need the firmware department to build you a special firmware to perform your testing, this can be dangerous because it really only helps you and gives them extra work. I don’t mean this to sound like you can’t trust anyone type of a thing or everyone is against me type of thing, but it comes down to a lot of communication and early planning if support from other groups is required for success. Lots of communication! 8. Be patient and try to do it right the first time There is often project pressure and it can feel like people seem to forget that products need to be tested and manufactured. Try to resist the urge to, “just get something out there” and fix it later. It may seem like there is a lot of pressure to get done, but the pressure will get worse when the test isn’t working in manufacturing. Often the problem is just communicating with the team that the test isn’t ready and people will be willing to work with situation. This one can be really hard, but a test group the slaps everything together will end up in a huge hole after a few years of this. 9. Use high quality equipment I have this joke that every time I tell my manager how much something will cost they say “merciful heavens!” and then faint. Seriously though, I do think it always pays off to buy high quality equipment from real instrumentation companies. In general, avoid using USB instruments and devices in your test system. This is just my opinion, but I’ve had issues with problematic USB communications. At the very least use USB instruments from reputable vendors like Keysight and NI. Try to use a PXI chassis or even though it’s old, GPIB. Use an industrial computer with a consistent operating system. I’ve seen lots of times where test systems that are supposed to be the same are running on random PCs with different versions of Windows, 32-bit, 64-bit etc. I sometimes feel like I’m the only one who cares about this, but it’s important to get your computers from a real industrial computer supplier whose business it is to ensure a consistent setup. 10. Get stuff done I don’t know if this will make sense, but sometimes it’s easier to just wait for things to sort of figure themselves out, but resist this temptation, really try to get things moving and done. When I feel stuck, again the solution often seems to be lots of communication!

1 Comment

EDMUNDO

12/28/2018 08:09:39 am

Great post!

Reply

Your comment will be posted after it is approved.

Leave a Reply. |

Archives

December 2022

Categories

All

|

RSS Feed

RSS Feed