
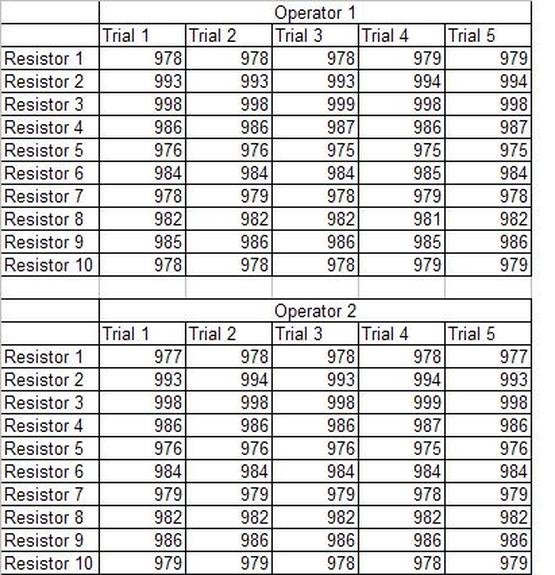

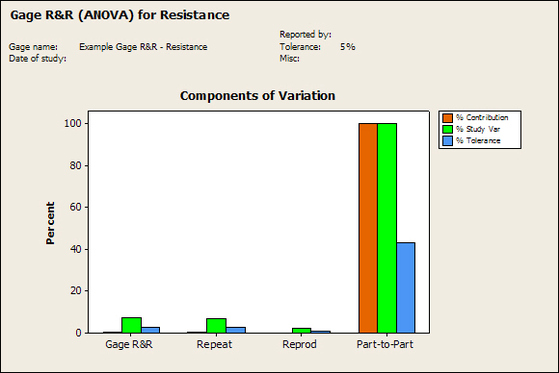

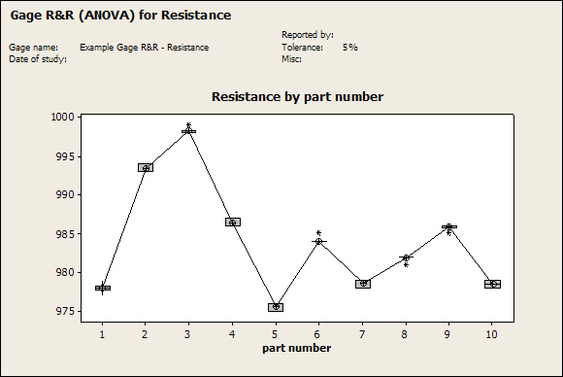

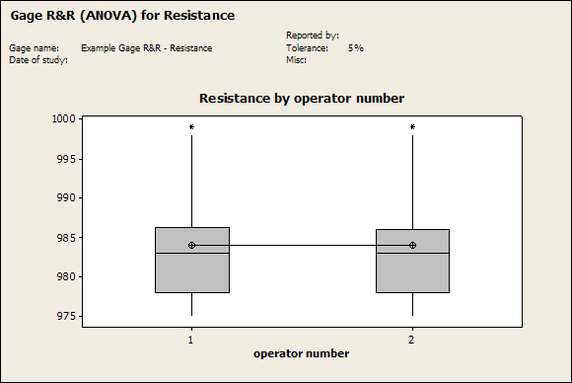

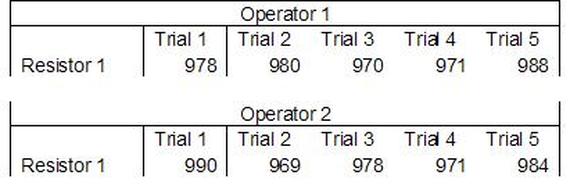

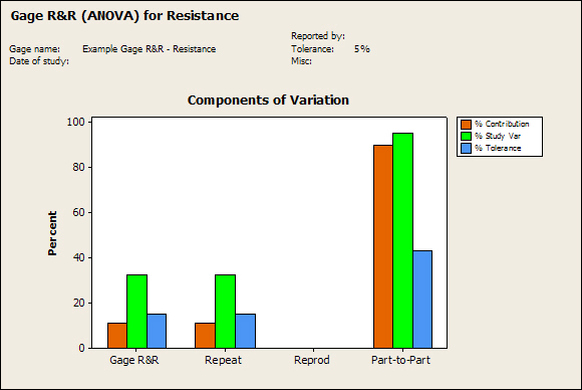

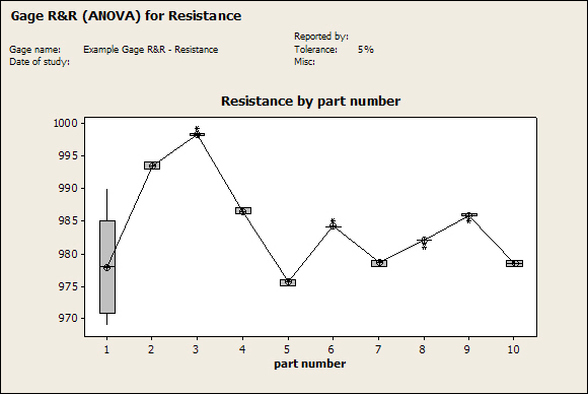

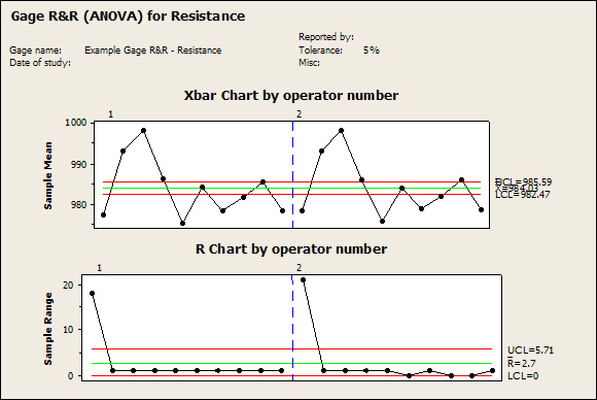

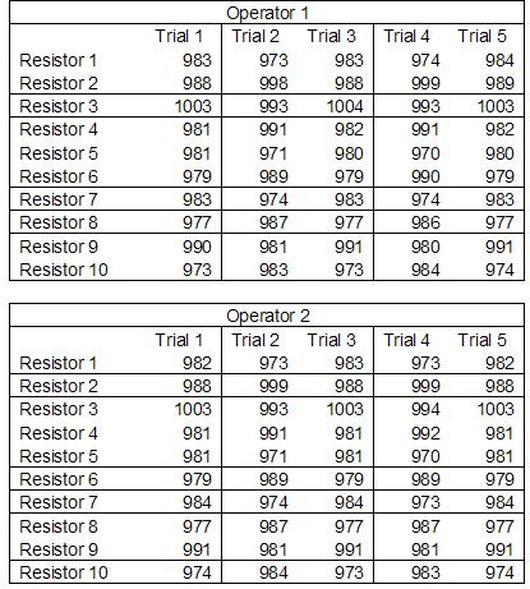

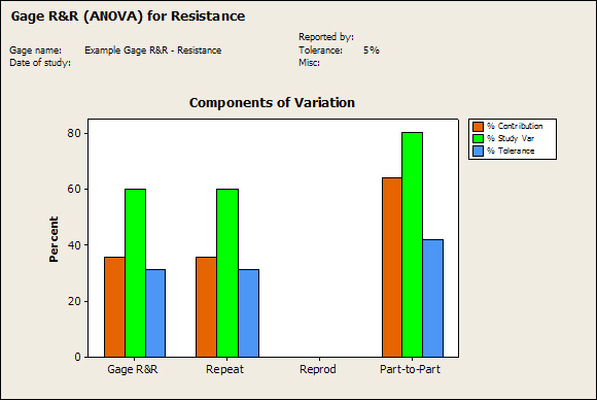

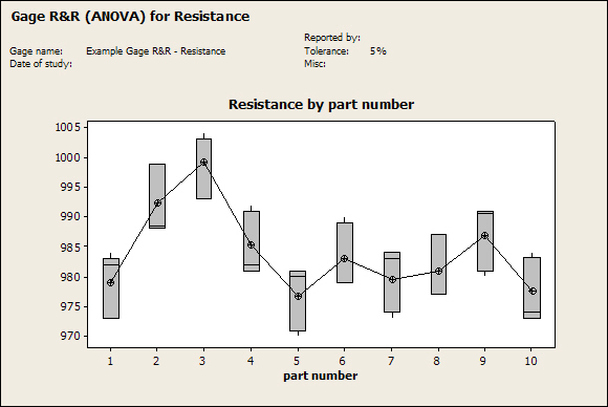

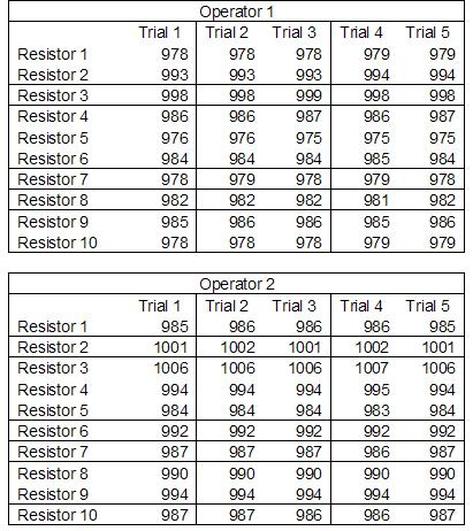

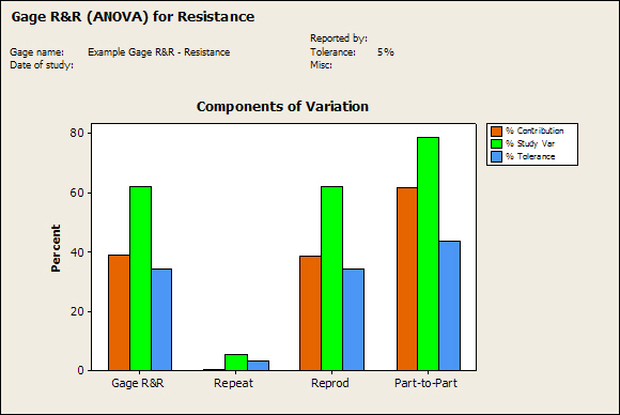

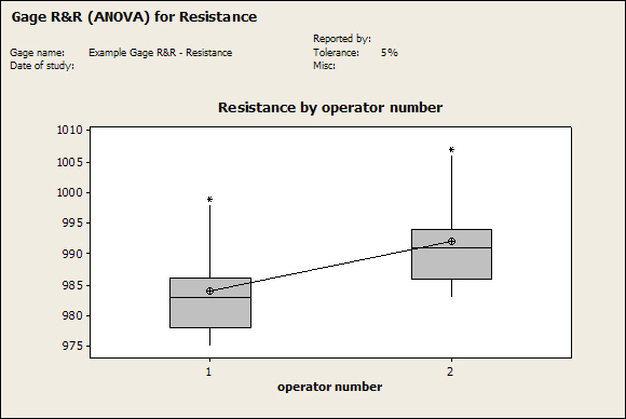

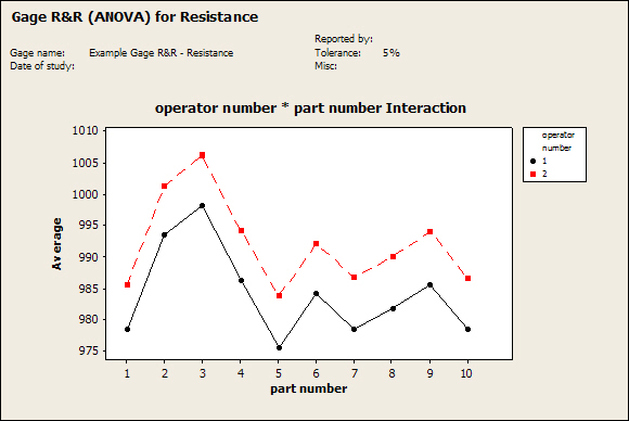

Having a good understanding of statistics is important for a test engineer. There is a lot that can be learned about your tests by examining the results. Some of the important tools are taken from the Six Sigma quality world, but there is so much going on there that I could never cover it all. Also, many of the Six Sigma techniques are more focused on manufacturing, preventing waste (lean manufacturing) and achieving high yields. While these issues are of concern to a test engineer, you have to balance the desire for yield with the concern for quality.

One statistical topic of particular importance is the Gage Repeatability and Reproducibility (gage R&R) study. Also going by the name Measurement System Analysis, a gage study is necessary to determine whether a test system has significant sources of variation and where they are coming from.

Sources of Variation

There are three main sources of variation.

Operators

When reading about gage R&R, operators tend to be discussed as the actual people who are performing the measurements, but they can also be thought of as combinations of equipment. If you wanted to look for variation problems with test fixtures or test systems, operators could be thought of as follows:

- Operator 1 = Test System 1 + Fixture 1
- Operator 2 = Test System 1 + Fixture 2
- Operator 3 = Test System 2 + Fixture 1
- Operator 4 = Test System 2 + Fixture 2

When operators are people, comparing them allows you to identify poor training or a setup that is difficult to reproduce.

Measurement System/Method

What is being considered here is the instrument taking a measurement or the test/measurement method. The word “gage” is used like the gage on a meter that shows a measurement; it’s just a substitute for instrument.

Parts

Ideally, parts should be the main source of variation revealed by the gage study. When designing a gage study, all of the parts chosen should ideally represent the entire population of parts by spanning the full range of tolerances.
This is not always possible, as the availability of parts may be limited or parts at the extremes of the tolerance may not be available. Spanning the range is important for seeing how the system performs on all of the parts it may have to measure. If an instrument or test system can measure accurately at the low end of the part tolerance but not toward the upper end, that is a linearity problem.

Operator by Part

There is actually a fourth source, but it’s a combination of the others. Once operator and part variability have been considered, operators can be evaluated on a per-part basis. This may reveal that none of the operators can measure a particular part consistently. While this would also show up in the part variation, it is more difficult to tell in that case whether the variation is a gage repeatability problem or an operator problem (due to that part).

Other than part-to-part, all of the sources of variation can be classified as problems due to repeatability or reproducibility.

Repeatability

Repeatability is defined as variation due to instrument error. Of the sources of variation listed above, Measurement System/Method is assessed by examining the repeatability. A part is measured several times without changing the setup or the operator. That data is then used to quantify the variation in a measurement system resulting from an instrument or test method.

Reproducibility

Reproducibility is defined as the variation due to external sources such as operators and environmental conditions. Of the sources of variation listed above, Operators and Operator by Part are assessed by examining the reproducibility. Here, several operators measure the same part repeatedly. This reveals variability due to the operators.

Example R&R Studies

In order to show some of the features and problems that can be found, I’ll work through a simple R&R study and then alter the data to show some features.
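To make the repeatability and reproducibility definitions above concrete, here is a minimal Python sketch of the usual crossed ANOVA variance-component arithmetic for a balanced study. It is not a reproduction of Minitab's output. The function name `gage_rr`, the helper `make_data` and the synthetic readings are assumptions made up for illustration and are not the measured resistor data.

```python
from statistics import mean

def gage_rr(data):
    """Variance components for a balanced crossed study.

    data[part][operator] is a list of repeated readings.
    """
    p, o, r = len(data), len(data[0]), len(data[0][0])
    values = [x for part in data for cell in part for x in cell]
    grand = mean(values)
    part_means = [mean(x for cell in part for x in cell) for part in data]
    op_means = [mean(x for part in data for x in part[j]) for j in range(o)]
    cell_means = [mean(cell) for part in data for cell in part]

    # Sums of squares for parts, operators, interaction and error.
    ss_part = o * r * sum((m - grand) ** 2 for m in part_means)
    ss_op = p * r * sum((m - grand) ** 2 for m in op_means)
    ss_cell = r * sum((m - grand) ** 2 for m in cell_means)
    ss_total = sum((x - grand) ** 2 for x in values)
    ss_inter = ss_cell - ss_part - ss_op
    ss_error = ss_total - ss_cell

    # Mean squares.
    ms_part = ss_part / (p - 1)
    ms_op = ss_op / (o - 1)
    ms_inter = ss_inter / ((p - 1) * (o - 1))
    ms_error = ss_error / (p * o * (r - 1))

    # Variance components (negative estimates are clamped to zero).
    repeatability = ms_error
    interaction = max(0.0, (ms_inter - ms_error) / r)
    operator = max(0.0, (ms_op - ms_inter) / (p * r))
    part = max(0.0, (ms_part - ms_inter) / (o * r))
    return {
        "repeatability": repeatability,
        "reproducibility": operator + interaction,
        "part": part,
    }

# Hypothetical data: 10 parts, 2 operators, 5 readings each.
NOISE = [-0.2, 0.1, 0.0, 0.2, -0.1]

def make_data(op2_offset=0.0):
    return [
        [[975.0 + 2 * i + n + (op2_offset if j == 1 else 0.0) for n in NOISE]
         for j in range(2)]
        for i in range(10)
    ]

print(gage_rr(make_data()))                # part variation dominates
print(gage_rr(make_data(op2_offset=8.0)))  # reproducibility grows
```

With no offset, nearly all of the variance lands in the part component, which is the healthy case. Adding a constant offset to the second operator moves variance into reproducibility while leaving repeatability unchanged.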
Setup

I’m going to measure ten 1k ohm resistors five times each, with two operators, and analyze the results with Minitab statistical software. In an ideal gage study the parts should represent the complete population of parts, so if I use 1k 5% resistors I should have some in my experiment that measure at the tolerance extremes (950 ohms to 1050 ohms).

Experiment 1: Measured Data

The first experiment was to gather the data and analyze the results. I used two Fluke 189 DMMs to measure the resistance and act as the two operators. Figure 1 shows the data.

Figure 1. Original measured resistance data.

Figure 1 shows that I could not find a resistor population representative of the tolerance. As stated, the tolerance is 5%, giving a range of resistances of 950 to 1050 ohms. However, none of the resistors in this population were even at 1k. This isn’t ideal, but I wanted to use real data for the first experiment. Figure 2 shows the Minitab result of the crossed ANOVA gage study.

Figure 2. Gage R&R results.

Figure 2 shows that the vast majority of the variation found was due to part-to-part variation. This is what we want to see when evaluating our test system. Figure 3 is another plot from this same experiment. It also shows the variation due to the parts, using a connected box plot.

Figure 3. Connected box plot by part number.

Figure 3 shows a connected box plot, where each box plot shows the following:

- Outliers are shown as a star (*).
- The upper whisker extends to the maximum data point within 1.5 box heights of the top of the box.
- The box itself shows the median and the first and third quartiles. The first quartile is the bottom line of the box: 25% of the data are less than or equal to it. The median is the center line: 50% of the data are less than or equal to it. The third quartile is the top line: 75% of the data are less than or equal to it.
- The lower whisker extends to the minimum data point within 1.5 box heights of the bottom of the box.
- The connection line shows the averages.
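The box plot rules listed above can be sketched in a few lines of Python. This is only an illustration: the function name `box_plot_stats` and the sample values are made up, and the quartile method used here (`method="inclusive"` from the standard library) is an assumption that may not match the exact quartile convention Minitab uses.

```python
from statistics import mean, quantiles

def box_plot_stats(values):
    """Return the quantities a box plot draws for one group of readings."""
    data = sorted(values)
    q1, median, q3 = quantiles(data, n=4, method="inclusive")
    box_height = q3 - q1                      # interquartile range
    upper_fence = q3 + 1.5 * box_height
    lower_fence = q1 - 1.5 * box_height
    inside = [x for x in data if lower_fence <= x <= upper_fence]
    return {
        "q1": q1,
        "median": median,
        "q3": q3,
        "lower_whisker": min(inside),   # lowest point within 1.5 box heights
        "upper_whisker": max(inside),   # highest point within 1.5 box heights
        "outliers": [x for x in data if x < lower_fence or x > upper_fence],
        "mean": mean(data),             # what the connection line joins
    }

# Hypothetical readings with one obvious high outlier.
print(box_plot_stats([1, 2, 3, 4, 5, 6, 7, 8, 9, 30]))
```

Running it on each part (or each operator) gives the numbers behind plots like Figures 3 and 4.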
Figure 4 shows the connected box plot by operator.

Figure 4. Connected box plot by operator number.

In Figure 4 you can see an outlier on the high side. This really illustrates the poor population I have for a 1k resistor, since a value below 1k is being flagged as a high outlier. We can also see from this plot that the reproducibility was very good, as both operators look about identical.

Experiment 2: Problem with part 1

For this experiment I have altered the data for part 1 to make it look like it had some sort of repeatability issue. Figure 5 shows the new part 1 data.

Figure 5. Altered part 1 data.

Figure 6 shows the ANOVA gage study result with altered part 1 data.

Figure 6. ANOVA gage study with altered part 1 data.

Figure 6 shows that a more significant portion of the variation is now due to repeatability, but we have to look at some of the other plots to understand what is really happening. Figure 7 shows the connected box plot by part number.

Figure 7. Connected box plot by part number with altered part 1 data.

Now we can see from Figure 7 that there was something wrong with the repeatability of part 1. With this new data we can conclude that there is probably something going on with part 1, since it cannot be measured repeatably. We should also check that this problem was not due to just one of the operators, which Figure 7 wouldn’t necessarily show. Figure 8 shows the Xbar and R chart for the experiment.

Figure 8. Xbar and R chart with altered part 1 data.

Figure 8 shows us that both of the operators measured a large range of values for part 1 (R chart). This tells us that both of the operators had trouble measuring this part accurately. The Xbar chart shows the averages of all the parts by operator, along with the grand average and the upper and lower control limit lines. The R (range) chart shows the range of the measurements made and plots them with the average range and control limits.
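As a rough illustration of how the control limits on an Xbar and R chart are usually obtained, here is a sketch using the standard Shewhart constants A2, D3 and D4. The constants are rounded table values (published tables differ slightly in the last digit). The function name `xbar_r_limits` and the example subgroups are assumptions for illustration. In a gage study each subgroup would be the repeated readings of one part by one operator.

```python
from statistics import mean

# Rounded Shewhart constants (A2, D3, D4) keyed by subgroup size.
CONSTANTS = {
    2: (1.880, 0.0, 3.267),
    3: (1.023, 0.0, 2.574),
    4: (0.729, 0.0, 2.282),
    5: (0.577, 0.0, 2.114),
}

def xbar_r_limits(subgroups):
    """Center lines and control limits for Xbar and R charts."""
    n = len(subgroups[0])
    a2, d3, d4 = CONSTANTS[n]
    means = [mean(g) for g in subgroups]
    ranges = [max(g) - min(g) for g in subgroups]
    grand_mean = mean(means)    # Xbar chart center line
    mean_range = mean(ranges)   # R chart center line
    return {
        "xbar_center": grand_mean,
        "xbar_ucl": grand_mean + a2 * mean_range,
        "xbar_lcl": grand_mean - a2 * mean_range,
        "r_center": mean_range,
        "r_ucl": d4 * mean_range,
        "r_lcl": d3 * mean_range,
    }

# Hypothetical subgroups of five repeated readings each.
print(xbar_r_limits([[1, 2, 3, 4, 5], [2, 3, 4, 5, 6]]))
```

A subgroup whose range plots above `r_ucl` is the kind of signal described for part 1 in Figure 8.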
We can see in Figure 8 that the range for part 1 on operator 2 was over 20 ohms.

Experiment 3: Repeatability Problems

For this experiment I have altered all of the original data so that each measurement was offset by either plus or minus five ohms. Figure 9 shows the data set used.

Figure 9. Resistance measurement data with added offset.

Figure 10 shows the ANOVA gage study result with offset data.

Figure 10. ANOVA gage study result with offset added.

From Figure 10 it can be seen that repeatability is a significant source of variation and may be a problem that needs investigating. This test system may not be making very accurate measurements. Figure 11 shows the resistance by part number.

Figure 11. Resistance by part number for data with offset added.

Figure 11 shows large boxes in the box plot, indicating a larger spread in the resistance measurements. The spread is consistent for each part, which indicates a repeatability problem.

Experiment 4: Reproducibility Problem

For this experiment the original data was again modified. This time an offset of plus eight ohms was added to all of the operator 2 data. Figure 12 shows the new data set.

Figure 12. Resistance measurement data with +8 ohms added to operator 2.

Figure 13 shows the ANOVA gage study results.

Figure 13. ANOVA gage study results with +8 ohms added to operator 2.

From Figure 13 we can see that reproducibility is now a significant portion of the variance and repeatability is back down. Figure 14 shows the resistance by operator number.

Figure 14. Resistance by operator number with +8 ohm offset added to operator 2.

Figure 14 shows the 8 ohm shift nicely and would help to identify a reproducibility problem. Figure 15 shows the operator by part interaction.

Figure 15. Operator by part interaction with +8 ohm offset added to operator 2.

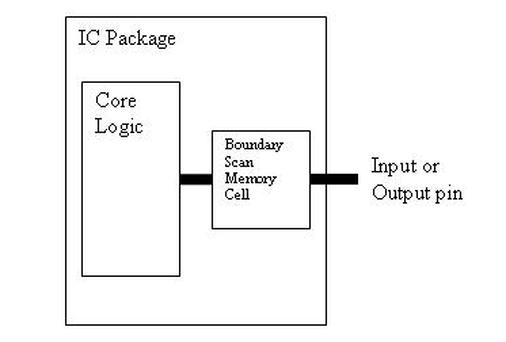

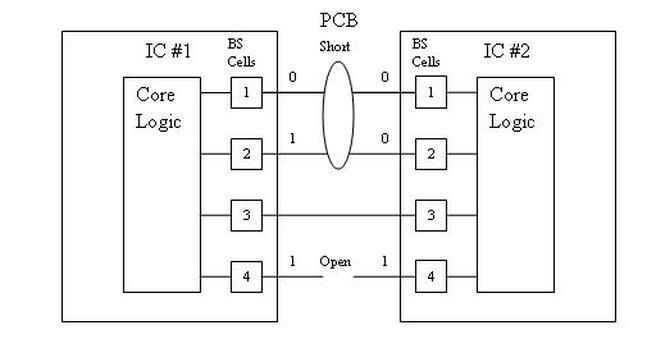

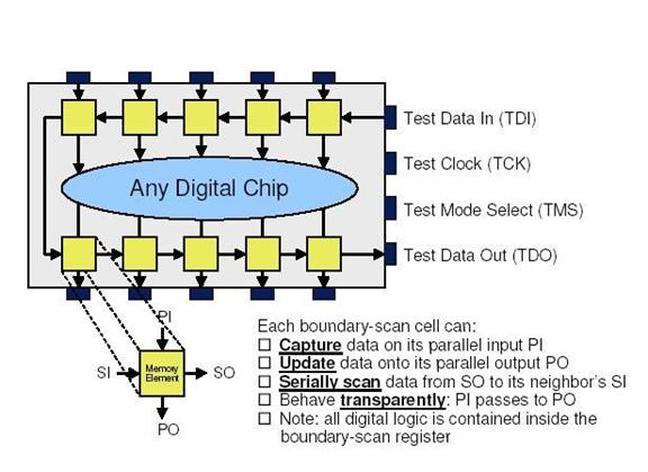

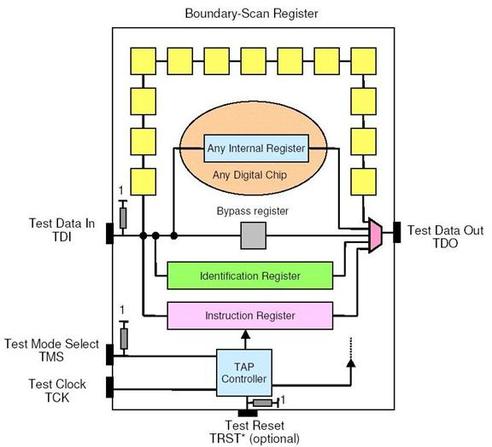

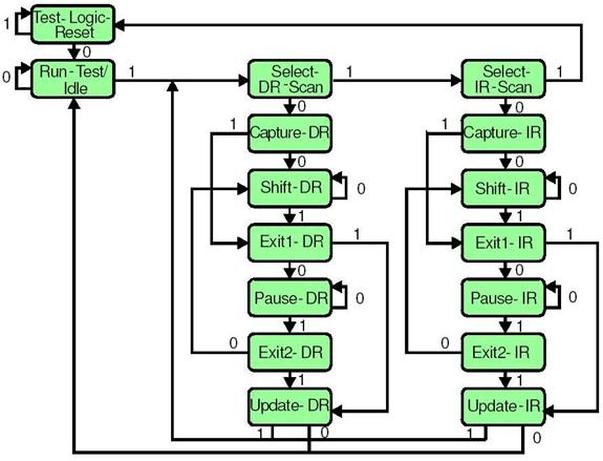

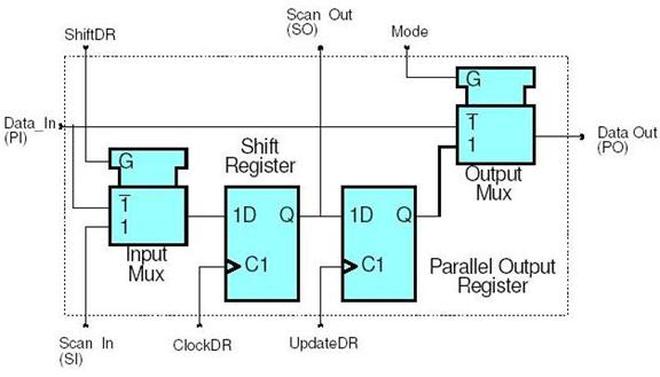

Figure 15 also shows that operator 2 consistently measures the resistances higher than operator 1, again showing a reproducibility problem.

Other Thoughts

Using Minitab is nice for performing these studies because you get lots of plots in just a few clicks, but it is pretty formal and isn’t really necessary for learning about your test system. Just designing the study, taking the data and doing some quick plots in Excel is usually all you need to figure out what needs more investigation.

Minitab also gives you a lot of quantitative data, like a p-value for each of the sources of variation. The idea with the p-value is that you hypothesize that, for example, repeatability is not a significant source of variation, and the resulting p-value then tells you whether to reject that hypothesis (low p = reject the hypothesis). This is probably more useful if you have to present your results in a formal way.

Summary

Performing a gage study can reveal a lot about your test system that you need to know before you can feel comfortable with the test results it is producing. The sources of variation are part-to-part, measurement system/method (repeatability) and operators (reproducibility). Oftentimes you have to look at the same data presented in many different ways to see the patterns and get the full picture of the problems. While gage studies reveal problems, that is just the start; it’s still up to you to figure out what is causing the problem and how to correct it.

Boundary scan testing was created as a replacement for in-circuit testing in the 1980s. The motivation was a number of trends in board technology that would limit testability. One obstacle for in-circuit test was the multi-layer board: it may be difficult or impossible to probe the internal layers with the pogo pins of an in-circuit tester. Other probing problems became evident with the advent of surface mount components and multi-sided boards.
Surface mount was shrinking boards to the point that there was no room to probe. Multi-sided boards complicate fixture design significantly, increasing test costs. A traditional in-circuit board test will look for the following defects.

- Missing components
- Damaged components
- Opens and shorts
- Misaligned components
- Wrong components

Boundary scan took all of these defects into consideration and is able to test for them. Boundary scan is not an end-all; it’s best to think of it as one tool in an effective test strategy. It’s not that good at functional testing or at testing the ICs on the board themselves.

Boundary Scan Technology Basics

IEEE 1149.1 is the standard that defines digital boundary scan. There are a number of follow-on standards that build on this one, and the basic concepts of 1149.1 apply to all of them as well. Boundary scan has to be added to a new IC while it is being designed. The designer can choose to implement the boundary scan circuitry any way they want, but it should (although it doesn’t always) follow the standard.

Leaving out a lot of details for the moment, a boundary scan memory cell is put in between an IC’s logic and its pins to the outside. Figure 1 shows this idea. This memory cell can then be programmed to either a 0 or a 1 to artificially control the inputs and outputs of the IC. There is also a method to read the state of all the boundary scan memory cells. Using multiple boundary scan enabled ICs together, the ability to write and read these memory cells makes it possible to perform the in-circuit tests listed previously.

Figure 1. Boundary scan memory cell between IC logic and external pin.

Let’s take a more complete view of how this makes useful tests.

Figure 2. Shorts and opens testing with boundary scan.

Figure 2 shows how we can use boundary scan to test for shorts and opens (the boundary scan cells are numbered for reference).
The pins of the two ICs are connected together on the PCB. Keep in mind that these pins are routed to some other place on the PCB for a functional reason. The way the pins of IC 1 and IC 2 are connected is through the boundary scan cells, and these connections are just for testing. The whole boundary scan system is turned off when the board is actually being used, and the cells have no effect. The short shown in Figure 2 is at the surface mount pads of the two pins on IC 1. Since we can read and write the contents of the boundary scan cells, we can set BS cell 1 on IC 1 to a 0 and BS cell 2 on IC 1 to a 1, and then read at IC 2 that both cells 1 and 2 read a 0. Similarly, since there is an open between the two number 4 pins, the receiving pin will always show a 0.

Boundary Scan Architecture

There are four main parts to the boundary scan architecture.

- Bypass Register
- Instruction Register
- Boundary Scan Register
- Test Access Port (TAP) and TAP Controller

The rest of these figures are from a really good boundary scan tutorial from ASSET InterTech (www.asset-intertech.com).

There are four required pins that have to be added to an IC to operate the boundary scan system.

- Test Data In (TDI)
- Test Data Out (TDO)
- Test Mode Select (TMS)
- Test Clock (TCK)

Figure 3 shows an IC with the boundary scan pins added to the package.

Figure 3. Boundary scan enabled IC.

In Figure 3 you can see the parallel inputs and outputs along with the serial inputs and outputs. I talked about being able to read and write all of the individual boundary scan cells; this is accomplished with the serial input and output connections to each boundary scan memory cell. Data is written in through TDI and read out through TDO as serial strings of zeros and ones. The TCK clock pin is used to clock this data in and out. TMS is a control signal used to coordinate all of these operations. Looking at Figure 4, we start to see how the four main pieces of boundary scan are set up.

Figure 4. A boundary scan enabled IC showing boundary scan components.

In Figure 4 the yellow squares are the memory cells; these make up the boundary scan register. The bypass register can be selected to leave the IC in normal operation, giving a short path from TDI to TDO that bypasses the boundary scan register. The TAP controller is a state machine that interprets commands on the TMS input to control the boundary scan system. The instruction register controls the current operation of the boundary scan system; it is programmed through the TDI input and controlled by the TAP controller. Although I didn’t mention it, Figure 4 also shows an identification register. This can be used to store a unique identifier for that IC.

Instruction Register

The instruction register is where commands are loaded to control the boundary scan system. Here are the steps to use the instruction register.

1. Using the TAP controller via the TMS input, the command is sent to connect the instruction register between TDI and TDO. This makes the register accessible so commands can be written to it.
2. A command in the form of a serial string is clocked into the instruction register using TCK.
3. What are commands? The commands put the boundary scan system into useful configurations for testing. Here are some of the commands:
   - Bypass: selects the bypass register between TDI and TDO.
   - Sample: selects the boundary scan register between TDI and TDO and sets the boundary scan cells to capture the values present at the pins so they can be shifted out serially.
   - Preload: selects the boundary scan register and sets the boundary scan cells to a known state.
   - Extest: selects the boundary scan register and sets the boundary scan cells to a state where interconnect testing can be performed. Basically, it isolates the IC logic for interconnect testing.
   - Intest: selects the boundary scan register and sets the boundary scan cells to apply their values to the internal IC logic.
   - Idcode: connects the identification register.
   - Clamp: clamps the output pins to a constant state.
   - Highz: puts the output pins in a high-impedance state, as in a tri-state output.
4. Upon sending the command to do so from the TAP controller, the command that was shifted into the instruction register is applied and takes effect on the system.

Test Access Port and Controller

The test access port is really just the four pins: TDI, TDO, TMS and TCK. The TAP controller is where the TMS and TCK signals are interpreted. The TAP controller is implemented as a state machine that changes state on the rising edge of TCK according to the value on TMS, and updates the output from the TAP controller on the falling edge of TCK. Figure 5 shows the TAP controller state machine.

Figure 5. The TAP controller state machine.

The arrows show the possible transitions, and the numbers listed on the arrows are the TMS values that create each transition. There are states for the IR (instruction) register and the DR (boundary scan data) register. Going back to the procedure given in the instruction register section, sending 0, 1, 1 (starting from Test-Logic-Reset) would select the instruction register. Then, holding TMS at 0 for the appropriate number of TCK rising edges would shift the command into the instruction register. Finally, 1, 1 would update the instruction register; the update is what applies the command to the system. With the command sent, the states can continue on to the DR portion for shifting in test data strings.

Boundary Scan Register

The boundary scan cells located on the input and output pins are linked together in a serial string to form the boundary scan register. Figure 6 shows an example of how a boundary scan cell is designed.

Figure 6. Boundary scan cell design.
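To make the TAP controller walk-through above easier to follow, here is a small Python sketch of the 16-state TAP state machine. The state names follow IEEE 1149.1. The table layout and the helper names `step` and `walk` are assumptions for illustration. Only the state transitions are modelled, not the registers or the data shifted through TDI and TDO.

```python
# For each state: (next state when TMS = 0, next state when TMS = 1).
TAP_TRANSITIONS = {
    "Test-Logic-Reset": ("Run-Test/Idle", "Test-Logic-Reset"),
    "Run-Test/Idle": ("Run-Test/Idle", "Select-DR-Scan"),
    "Select-DR-Scan": ("Capture-DR", "Select-IR-Scan"),
    "Capture-DR": ("Shift-DR", "Exit1-DR"),
    "Shift-DR": ("Shift-DR", "Exit1-DR"),
    "Exit1-DR": ("Pause-DR", "Update-DR"),
    "Pause-DR": ("Pause-DR", "Exit2-DR"),
    "Exit2-DR": ("Shift-DR", "Update-DR"),
    "Update-DR": ("Run-Test/Idle", "Select-DR-Scan"),
    "Select-IR-Scan": ("Capture-IR", "Test-Logic-Reset"),
    "Capture-IR": ("Shift-IR", "Exit1-IR"),
    "Shift-IR": ("Shift-IR", "Exit1-IR"),
    "Exit1-IR": ("Pause-IR", "Update-IR"),
    "Pause-IR": ("Pause-IR", "Exit2-IR"),
    "Exit2-IR": ("Shift-IR", "Update-IR"),
    "Update-IR": ("Run-Test/Idle", "Select-DR-Scan"),
}

def step(state, tms):
    """Advance one TCK rising edge with the given TMS value (0 or 1)."""
    return TAP_TRANSITIONS[state][tms]

def walk(state, tms_bits):
    """Apply a sequence of TMS values and return the final state."""
    for tms in tms_bits:
        state = step(state, tms)
    return state

# The sequence described in the text, starting from reset.
state = walk("Test-Logic-Reset", [0, 1, 1])   # select the IR path
print(state)
state = walk(state, [0, 0, 0, 0])             # capture, then stay in Shift-IR
print(state)
state = walk(state, [1, 1])                   # exit and update
print(state)
```

One useful property visible in the table is that holding TMS at 1 for five clocks returns the controller to Test-Logic-Reset from any state.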

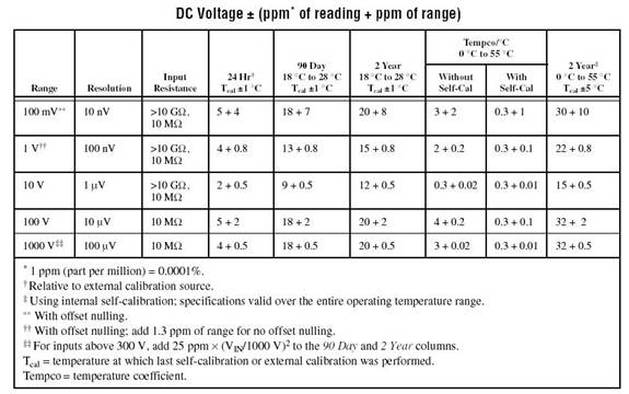

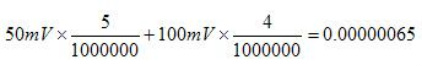

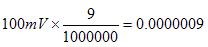

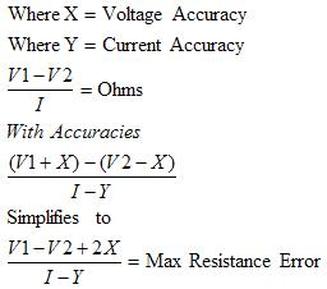

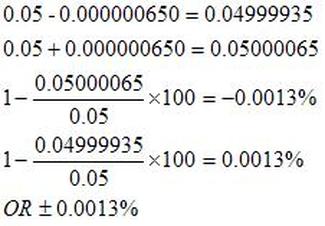

Summary

While there are endless topics that could be addressed related to boundary scan, I’ll leave it with the basics. The basic operation of boundary scan is:

- Scan in an instruction and apply it.
- Scan in data and use it for testing.
- Read back the data to find interconnect defects.

The main pieces are:

- Instruction Register
- Boundary Scan Data Register
- Bypass Register
- TAP and TAP Controller

Most measurement comes down to measuring voltage or current. The simplest way to measure voltage would be with a DMM set to DC voltage. A lot of ATE-type instruments still just measure voltage, but add a lot of higher-level functions and increased capabilities. I’m going to look at some simple measurement issues and then at some more advanced instruments and what they can do.

Measurement Basics

Voltage and current measurements are the basis of what the various measurement instruments do. Voltage is measured in parallel across two points, and current is measured in series, in the current path. A resistance measurement is made by measuring both the voltage and the current and calculating the resistance using Ohm’s law. A useful improvement for measuring resistance is to use a 4-wire or Kelvin measurement. Figure 1 shows how a 4-wire measurement is made.

Figure 1. A 4-wire or Kelvin resistance measurement.

In Figure 1 separate wires are used to connect the ammeter and the voltmeter to the resistance you intend to measure. The advantage here is that the forced current causes a voltage drop on its own wires but not on the voltmeter’s wires. The voltmeter’s wires carry very little current, so there is almost no voltage drop in them and the measured resistance is more accurate.

Instrument Accuracy

It’s important to understand how to calculate accuracy for the instrument you are using. It happens all the time that a measurement seems like it is not working, only to find that the problem is the accuracy of the selected measurement range.
Figure 2 shows the DC voltage accuracy specification for the National Instruments 4071 PXI DMM.

Figure 2. DC voltage accuracy specification for the National Instruments 4071 PXI DMM.

Figure 2 shows that accuracy is calculated as parts per million of reading plus parts per million of range. The figure shows many different columns that relate to calibration and temperature. For example, within 24 hours of calibration in the 100 mV range the accuracy is 5 + 4. If you are trying to read 50 mV, the accuracy is calculated as follows.

$$50\,\text{mV} \times 5\,\text{ppm} + 100\,\text{mV} \times 4\,\text{ppm} = 0.25\,\mu\text{V} + 0.40\,\mu\text{V} = 0.65\,\mu\text{V}$$

Or 650 nV. To make it a little simpler you could just calculate the worst-case accuracy for the range you are in and know you will always have better accuracy than that. To do that, add the reading and range terms together and apply them to the full-range voltage, like this.

$$100\,\text{mV} \times (5 + 4)\,\text{ppm} = 0.9\,\mu\text{V}$$

Or 900 nV. Accuracy isn’t always in ppm; sometimes it’s given as a percentage, which I think is a little more intuitive, but it’s still the same idea.

While the instrument itself has accuracy as just discussed, there are many other factors that can affect measurement accuracy. These include input loading, leakage resistance and current, shielding and guarding.

Here is another example of calculating accuracy, in this case for resistance. Figure 3 shows a resistor where the voltages are known on either side of the resistor.

Figure 3. Resistor to be measured.

Error

The error is how much a measurement differs from the true value. Usually this is expressed as a ratio, where the maximum error can be calculated from the worst-case observed or calculated measurements. For example, if you take the 50 mV reading with 650 nV of measurement error from above, as a percent the worst case is:

$$\frac{650\,\text{nV}}{50\,\text{mV}} \times 100\% = 0.0013\%$$

Resolution

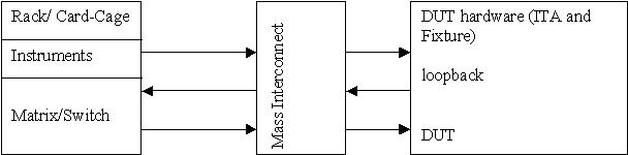

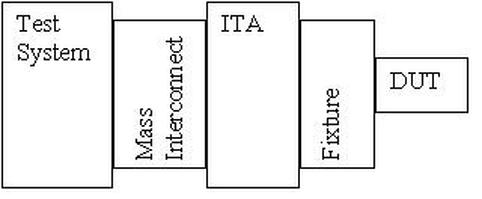

The table in Figure 2 also has a column for resolution. Resolution is the smallest portion of the input signal that the instrument can display. For a 5½ digit display there can be 200,000 counts (0 to 199,999). This makes the resolution of the display 1/200,000 = 0.0005%.

Sensitivity

This is kind of like the resolution; it is the smallest change in the signal that can be detected. The instrument has to detect and display a change in a signal, which is calculated from the resolution and the current measurement range. If the range is 200 mV and the number of counts is 200,000, then 0.2/200,000 = 1 uV sensitivity. There are also calculations for sensitivity based on the number of bits the instrument uses (the bits of the ADC or DAC): if you had 10 bits, the sensitivity is your input range divided into 2^10 parts. Depending on where you look, the definition given here for sensitivity may be the definition given for resolution. It’s kind of up to the different instrument manufacturers as to how it’s defined.

Range

The range is pretty obvious: you want to make sure you are not trying to measure a value that is larger than the selected range. However, just selecting the largest range possible all the time will hurt the accuracy of the measurement. I would say you want to measure in a range where the values you are attempting to measure are less than 90% of the range. If you are above 90%, consider whether you are going to see variation that will be out of range.

Precision

The term “precision” is used a lot, but it is really a more informal term that should not be substituted for accuracy. It is defined more in terms of measurement repeatability and reproducibility. It’s easy to find an explanation of accuracy vs. precision, and it usually involves a dart board.

Instrument Types

There are many different types of instruments. I’m going to try to cover the most general-purpose ones that I have worked with.
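Before going through the instrument types, the accuracy, resolution and sensitivity arithmetic from the preceding sections can be collected into a few small Python helpers. This is only a sketch of the calculations described in the text: the function names are made up for illustration, and the specification numbers passed in are the ones quoted in the examples, not values read from a datasheet by this code.

```python
def accuracy_volts(reading, full_range, ppm_reading, ppm_range):
    """Accuracy given as ppm of reading plus ppm of range, in volts."""
    return reading * ppm_reading * 1e-6 + full_range * ppm_range * 1e-6

def worst_case_accuracy_volts(full_range, ppm_reading, ppm_range):
    """Worst case for a range: treat the reading as full scale."""
    return accuracy_volts(full_range, full_range, ppm_reading, ppm_range)

def percent_error(error, reading):
    """Error expressed as a percentage of the reading."""
    return 100.0 * error / reading

def display_resolution_percent(counts):
    """Smallest displayable fraction of full scale, as a percentage."""
    return 100.0 / counts

def sensitivity_volts(full_range, counts):
    """Smallest detectable change for a given range and count total."""
    return full_range / counts

def adc_sensitivity_volts(full_range, bits):
    """Same idea, expressed through the number of converter bits."""
    return full_range / 2 ** bits

# The 50 mV reading on the 100 mV range with a 5 + 4 ppm specification.
err = accuracy_volts(0.050, 0.100, 5, 4)
print(err)                                      # about 650 nV
print(worst_case_accuracy_volts(0.100, 5, 4))   # about 900 nV
print(percent_error(err, 0.050))                # about 0.0013 %
print(sensitivity_volts(0.2, 200_000))          # about 1 uV
```

Keeping these as functions makes it easy to check a planned measurement against each available range before deciding which range to use.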
DAQ

A DAQ is kind of its own class of instrument that covers multiple functions all in one instrument. If you look at the websites for National Instruments or Keithley, they list DAQ in its own section even though it duplicates the function of many other instruments. A DAQ usually contains a counter/timer, digital I/O lines, analog I/O for single values, waveform output and digitizing. This really covers a lot, and you can do a lot with a DAQ. When higher performance is required, that is when you need to go to more specialized instruments, like ARBs or high-speed digitizers.

DMM/LCR

A DMM (digital multimeter) is usually required over a DAQ when increased measurement accuracy is required. The trade-off with a DMM is usually speed: in order to achieve higher accuracy, the DMM internally averages measurements over a longer time. Many DMMs also have the ability to digitize waveforms, only at slower speeds relative to other digitizing options. In addition to voltage, current and resistance, many DMMs include LCR capabilities for measuring capacitance and inductance.

A DMM is often specified in terms of digits. The NI 4071 from Figure 2 is a 7½ digit DMM. If 01.234567 was the number you were reading from the DMM, the 1 through 7 are the 7 digits and the 0 is the ½. It is only a ½ because this digit can only be a 0 or a 1. If you look at the ranges in Figure 2, the ½ digit really only becomes a 1 when the range is maxed out.

Programmable Power Supply, SMU

A programmable power supply is just what it sounds like: a power supply that can be controlled through software. An SMU (Source Measure Unit) is a programmable supply that also has measurement capability. An SMU can typically be configured to either force voltage and measure current or force current and measure voltage (FVMI and FIMV for short). With an SMU you set the voltage you want to output and set the maximum current limit.
Then, the instrument will vary the current in order to hold the voltage constant, and will generate some type of alarm if the current limit is reached. Another nice feature of SMUs and programmable supplies is the ability to sweep outputs through a list of settings.

ARB, Function Generator

An ARB, or arbitrary waveform generator, is a little different from a function generator in that the output can be programmed to a custom waveform. A function generator will typically only generate preset waveforms like sine or square waves. Again, having a DAQ might make these instruments unnecessary; it just depends on the specifications of each. Some features that may be better than on a DAQ are higher speed, higher accuracy, more triggering and ARB options, and improved noise performance and filtering.

Counter Timer

A counter timer is an instrument for counting pulses and measuring frequencies. Some applications I’ve seen include counting the number of times a signal crosses a set threshold in a given time, and generating a tightly controlled timing signal. In the second case, a signal is output at logic high from the counter timer and that signal is used as the acquisition time for a measurement.

Oscilloscope, High Speed Digitizer

High-speed digitizers, or the similar but more flexible oscilloscope, are needed for measuring signals that are too fast for other options like DMMs or DAQs. Where these instruments are really useful is for what’s called visualization, which means seeing what’s going on. When something is not working, it’s often important to have a high-speed oscilloscope in order to catch the noise or whatever glitch might be causing the trouble.

Summary

A few basic concepts are important to know when making electrical measurements, like how to determine the accuracy and knowing the effect of resolution and range on measurements. A few points on several different types of instruments were covered.
There is certainly a lot more to know about any of these instruments, but these are the kind of basics that I keep in my head to have a simple knowledge of what each one does.

A test engineer has to make sure that the products they are assigned to test are operating correctly now and in the future. Before any products can actually be tested, a test system platform must be designed and built that can implement the engineer’s vision for an effective test. This entry focuses on building a test system from scratch. It considers the high-level issues as well as some of the low-level design details.

Strategy

Building a test system is not the first task when a new product needs to be tested. Before you can start designing and building the system, you must know what products are being tested and what measurements need to be made to test those products. In other words, first come up with a test strategy and then build your tester to implement that strategy. A test strategy covers things like which failure modes to check for and how much time and money you should spend on test.

Before you can buy or build anything you also have to make some decisions about the strategy for your test system itself. That is, you need to decide on the architecture of your system. Here are some of the factors that go into deciding on tester architecture.

In what area of application is this test system going to be used? If you have a test strategy in place you will know this, but it can affect the type of hardware you use to implement your system.

1. R&D testing: If you are designing a test system for use while products are being developed, you need to make parametric measurements and have a flexible, informal setup that is fast to use. This may not be much more than an oscilloscope, but a more formal tester with some general software utilities may be called for.
2. Design validation and verification: This is a little more formal than the R&D test system. While it should be flexible enough for multiple different measurements, it may have to formally record the results of the testing to create a record that the design worked as conceived.
3. Manufacturing test: A manufacturing test system has to be fast and, hopefully, inexpensive. It has to be much more robust and automated. This is where most of the consideration of this entry will be.

What is the scope of products for this system to test? Again, this will most likely be answered by your test strategy, but you will need to know if it will be expected to test only a single product, multiple products or multiple product lines.

What is the expected lifespan of this test system? Is it expected to be a general-purpose test system that can test products which have not been designed yet? My thought on this is that it is better to try to make your systems simple and cheap, testing only a single product or a narrow line of products. I have personally seen several attempts to build universal, future-proof systems/ITAs/fixtures, only to have these projects grow out of control in time, budget and problems. Trying to build the ultimate end-all or universal system seems to be a trap that engineers often fall into. This may be a case of engineers trying to invent interesting but unnecessary projects for themselves.

Some other factors to consider include the expertise and hardware available, along with the development time and budget. If you have a lot of test developers with a lot of LabVIEW experience, you may want to take advantage of that. Is there any existing hardware that your company already owns? Perhaps you have a number of older PXI systems, with some hardware your people know well, that are no longer going to be needed. Assuming this will not create an obsolescence problem, reusing them may save time and cost on your project.
Obviously, you have to build a test system that is appropriate to the budget you have available. Often, development time can trade off with cost. For example, you may be able to develop tests from more general-purpose instruments instead of buying instruments (at a higher cost) designed for your exact test, like an instrument that implements some special serial data protocol.

The general architecture of a test system is usually based around a main bus. There are a number of bus options to choose from. A rule of thumb is that you should try to select a bus that has instruments available to perform 80% (if not all) of the measurements you need to make. Also consider how well the buses work together, for example when sending triggers and clocks across bus types. An embedded controller for a PXI chassis will have GPIB and USB ports, but you should be sure the instruments can be integrated well.

All of the test bus options trade off strengths and weaknesses, including the following.

1. Bandwidth
The bandwidth is a measure of the rate at which data can be sent across a bus, in MB/s. As the bandwidth goes up the bus is able to transmit more data in a given period of time. This is an important consideration if you are trying to gather some kind of real-time, high-speed data and will have to log it as fast as it's acquired.

2. Latency
Latency is the delay in the data transmission. This is different from bandwidth: bandwidth is the raw ability to transmit data, while latency is a more practical measure of how long a transfer takes to get started and arrive. Ethernet, for example, has pretty good bandwidth but poor latency.

3. Message-based versus register-based communication
This is an instrument distinction you will often see that traces back to the bus the instrument is based on. Message-based communication may be a little slower because the instrument has to interpret the commands. Register-based communication writes directly to the instrument's registers, for fast binary data transfer. It used to be that register-based instruments were much more difficult to program than message-based instruments, but that is not really visible to the programmer anymore because the programming is all done with high-level drivers that mask the complexity. However, the drivers may give back some of the performance gain of the register-based approach.

4. Range of data transmission
All the different bus types specify a maximum cable length for the instruments. LAN would be the best choice for long distances.

5. Instrument setup and software configuration
It may be relevant that some instruments are plug and play while others require a system reboot.

6. Ruggedness of the system and connector
How rugged is the connector for a particular bus? For example, USB is not a very rugged connector and can be easily unplugged.

There are several buses to choose from and I've probably missed several. Here are some popular ones.

1. PXI/VXI/PCI (Card Cage)
PCI card based instruments can be placed directly into a regular PC to put together a very simple test system. A PXI system and its newer variations are based on the PCI bus but go into a chassis to make a more rugged and dedicated test setup. The PXI chassis can then run off of an embedded or external computer and can work alongside other standalone instruments. VXI is kind of old news at this point, but it is pretty much the same kind of system as PXI. I have worked with both but I don't think VXI was ever that popular. PXI, however, is an extremely popular choice and has heavy backing from National Instruments, so it's fairly easy to get these systems going with LabVIEW.

2. GPIB (Rack and Stack with standalone instruments)
GPIB is usually used to connect standalone instruments. These instruments differ from card cage types in that they have a user interface on the front of the instrument for manual control, or they can be programmatically controlled via GPIB and a PC. Because of the interface options, instruments like this may be a better choice if you want the option to do more manual, bench-top testing. They are also often mounted in test racks along with a computer to create an automated system.

3. Monolithic
This is another option, where you do not actually build the test system: you buy it and configure it to do what you need. Integrated circuit test systems often come like this. There are a lot of special-purpose testers, like manufacturing defect analyzers, flying probe testers, shorts and opens testers and in-circuit testers. Of course, you can perform all of these types of tests on systems you build yourself too. I have worked with test systems from Eagle Test Systems (now part of Teradyne) that are specifically for testing analog and mixed-signal components and boards. While you still select the instruments you want, they all come from the same manufacturer, which also develops its own programming environment. The advantage is that you start with a nicely integrated test system, which is easier for some tasks (like automation with a handler). The disadvantage is that it is more expensive.

4. LAN
For remote monitoring applications, Ethernet-based instruments are available. A newer standard based on Ethernet is LXI, which adds triggering and synchronization to these instruments.

5. USB
There is also instrumentation that connects to a PC via USB and is plug and play. However, these instruments are short range and do not have a rugged connector.

Test System Structure

All test systems have a general structure with the following components.

1. Instruments
2. System Control and Software
3. Switching
4. Rack
5. Power
6. Fixturing
7. Mass Interconnect/ITA

Each area here merits some discussion and consideration. The remainder of this entry will cover each of these areas.

Instruments

The instruments are the heart of a test system, and selecting your instruments is the most critical part of building the system.
Selection of instruments is based on knowing what measurements you are going to make. One approach is the following set of steps for selecting instruments.

1. List the input and output parameters you need to measure on your DUT.
This could involve listing all the pins or test pads, or whatever the access method is, and then determining what needs to be done with them to get the measurements you need. Do you need to measure voltage at a pin and report that? Do you measure the input and the output at two pins and use the values to calculate the gain?

2. List the accuracy and resolution needed for each measurement.
Actually, you only need to look at the worst-case accuracies. For example, if you know that one of the measurements is a really low current, you don't really need to worry about all the other higher-current measurements. As for resolution, this might show up if you need to accurately digitize some high-speed signal. In an application where you want to digitize high-speed pulses for a certain length of time, a digitizer typically will trade off memory depth (number of samples) with acquisition speed. If you want to digitize really fast for a long time, that may take a more capable instrument. As a rule of thumb, the instrument should be at least four times more accurate than the accuracy you need from the measurement.

3. Check for redundant testing and instruments.
By now you should be getting a better picture of the instruments you will need in order to make all your measurements, but you still have not purchased anything. This is a good time to step back and see if you are duplicating any testing or any measurement capability. Don't buy a DMM to do a measurement just because that is what you would normally use to measure voltage. Maybe a DAQ that you are going to need for some other reason will have sufficient accuracy to measure that voltage, or maybe a power supply has the capability to measure voltage itself.

4. Will there be any need to do things in parallel?
How many simultaneous DC signals will you need? This can affect the number of DAC channels you may need. How many waveforms will you need to apply simultaneously? If this is greater than maybe four, go with a card cage; otherwise a standalone rack instrument might be all you need.

5. Will you need any specialized instruments to implement specific test standards, like a boundary scan controller?

6. Other instrument specs to consider: noise, power, offset compensation, dynamic range, isolation.

Some common instruments:

1. DMM – highest accuracy voltage and current measurements, slower speed.
2. Arb, Digitizer, DAQ – analog and digital I/O, able to generate patterns.
3. Counter – sometimes part of a DAQ; counts and times pulses, measures frequency.
4. SMU – forces and measures analog voltages and currents. Goes beyond a DAQ for specialized applications like really low currents.

System Control and Software

In reality, the first decision you make in your head may be what programming language this system will use. National Instruments LabVIEW is the most popular choice at the moment. Some test engineers don't like LabVIEW, but programming languages are one of those things that people really like to argue about. I feel LabVIEW is the easiest way to get a lot done fast, but it can also be easy to get yourself in trouble if you don't know a few basic things about it. I plan to write another entry all about LabVIEW in the near future. There are other choices, like NI's LabWindows/CVI and the Microsoft Visual Studio .NET languages. NI clearly cares about and wants you to use LabVIEW, and these others are less common.

If you are building a test system that will be used in a manufacturing environment you will need a user interface and a test executive. Well, you will need these things to have a serious test operation; I've seen places just getting started that didn't have much of either.
The test executive is what controls the test flow, handles sending your test results to a database and generates test reports. NI has a product that does all this for LabVIEW called TestStand. NI also has templates for manufacturing test user interfaces that work with TestStand. Engineers love to come up with reasons why they should write their own test executive and user interface, but I would start with what NI provides and only go off on your own when you reach a serious roadblock.

Switching

Switching can somewhat be seen as just another instrument, but it really calls for a strategy all of its own. There are the following choices when it comes to switching.

1. No switching
2. Switching in the test system only
3. In the DUT interface hardware (ITA/Fixture) only
4. In both the test system and the interface hardware

1. No switching
This is pretty rare. You almost always want the flexibility of some sort of switching, but the switches add a little bit of resistance in the measurement path, so for very sensitive measurements it may be required to connect the instruments directly to the DUT. This is also faster because you don't need the software to operate the switches and wait for them to settle. The downsides are that it's not flexible and not expandable. It can also be expensive because each instrument I/O point is completely dedicated to a single DUT test point.

2. Switching in the test system only
It's easy to add switching to a test system by putting the switching in the test system itself. There are lots of PXI matrix cards and other types of switching modules available. This makes it easier to develop your tests because all the switching is controlled with software drivers that the manufacturer provides. If you leave open space in your card cage this is also an easy way to add more switching in the future. A downside is that it can create some long signal paths. If all of the instrument outputs go out to a mass interconnect, to the DUT hardware, then back through the interconnect, through the matrix and back to the mass interconnect to finally arrive at the DUT, that can be a lot of wiring. This path is shown with the arrows in Figure 1. Some of the problem can be avoided by using parallel sense lines wired all the way to the DUT, but wiring length should be considered. Another problem is that you are leaving long wires attached to your DUT that can act as an antenna for noise when that line is switched out.

Figure 1. A long signal path through a mass interconnect and a matrix card.

3. In the DUT interface hardware (ITA/Fixture) only
If all the switching goes in the DUT hardware, this should prevent a lot of the signal length problems described above, because with the switches close to the DUT you don't have to loop back to the system. This also solves the problem of having long wires on your DUT when a line is switched out. The downside here is that you need to design some sort of hardware to control your switches with software. There are relay driver components that can go on a PCB to provide the logical control of the relays, but you still have to figure out how to implement those. Another problem is that if all of your switching is going into a test fixture, you'll need to design a PCB in order to have any sort of reliability in your system. You have to have the tools and capability to design a PCB, designing a PCB is expensive, and it's not easy to change if there are mistakes or to expand in the future.

4. In both the test system and the interface hardware
This is the most flexible option but also the most expensive. It's flexible because, as was discussed, sensitive measurements are switched in the fixture, and when accuracy is less of a concern the tester matrix can be used.

There are a few different switching topologies available.

1. Simple Relays or FETs
2. Multiplexers
3. Matrices

As with all things, try to keep the switching as simple as possible. Power and load relays are often separate from the instrument signal routing relays.

Rack

Designing and assembling the test rack is mostly a matter of working through a list of things to remember to help ensure success. Even if you are just putting together a card cage with a fixture, monitor, keyboard and mouse, these are things to consider. There isn't much to the physical rack itself; you can buy racks from many vendors and the instruments are a standard size to fit them. There are lots of rack mount PCs available also. Consider the following.

1. Assembly
- Use the cables recommended by the instrument manufacturers
- Secure all cables with strain relief
- Make sure all cables are long enough
- Use cable sleeves to keep things organized
- Label the cables with what they are used for

2. Size
- Leave some room to expand within the rack
- How much floor space will the tester take and how much do you have?
- Leave space so everything fits, including the instruments and other hardware but also cables, power sources and PC.

3. Portability
- Put wheels on the system
- Make sure it fits through the door and it can be transported. Also, make sure it will fit in the space where it will be used. I have seen testers that were too tall to fit in the room where they were supposed to be used.

4. Safety
- Interlocks – all the parts of the system should have interlocks, so the tester will not operate unless a switch is closed that (ideally) is connected to a safety shield
- Shields
- EMO – emergency shut-off switch somewhere easy to find
- Heavy stuff low – put heavy power supplies and the UPS low in the rack so it will not tip over easily
- Balance fixture shelf – if there will be a heavy fixture shelf where the DUT is connected, be sure it is not making the rack unstable
- High voltage points enclosed and disabled with interlocks

5. Environment of use
- Ruggedized for its environment
- What temperature and humidity is the room where the test system will be?
- Altitude
- Shock and vibration
- Pollution level – will the tester be working in a dirty environment?

6. Ergonomics
- Add some lights if necessary, particularly in the back side of the rack for looking inside
- Work space – what type of work surface will this tester need in a manufacturing environment?
- Position for operators – will the operator stand or sit? Is the tester comfortable to use? How tall are the people using the tester?
- Input devices – where do you put monitors, keyboards and mice?

7. Cooling
- Add fans for air flow. Consider doing some initial testing to see how hot the tester gets inside while running, to decide how many fans are needed and how effective they are.
- Instruments have their own individual fans. Think about where these are blowing and whether that is consistent with the overall cooling.
- Instruments often have specifications for ventilation that should be followed.

8. Signal Integrity and Grounding
- Prevent long signal paths by placing the DUT, switching and instruments as close together as possible. Add remote sensing lines for four-wire Kelvin measurements.
- Terminate all grounds at the same place, at the power distribution unit.

9. Maintenance
- Set up a preventative maintenance cycle for cleaning fan filters and performing instrument calibrations
- Replace worn parts
- Provide some documentation or training for how to maintain the system

Power

There are two types of power to consider in a test system: system power and DUT power supplies. The system power unit provides AC power to the computer and all the instruments, with some centralized controls. There might also be DC power supplies in the system specifically for powering fixtures and DUT hardware, depending on how you want to design it. The DUT power supplies are used to power your DUT itself (like 5V, +-15V rails, etc.), and these are really very similar to the other instruments.
Here are some power considerations.

1. System Power
- Does the system need power for different regions (120VAC and 240VAC)?
- Have an EMO button for emergency power off
- Have a UPS in the system so that if the power goes out the system can be shut down properly
- Consider the load that your system presents. The total draw with all the instruments should be about 70% of the total available.
- Determine a sequential power-up method. If all the instruments are connected to one switch, the inrush current could overload fuses or breakers.

2. DUT Power
Power supplies have lots of specifications that may or may not be relevant to what you are doing. Here are some of them; I won't try to explain them all here.
- Settling time
- Output noise
- Fast programming of levels
- Remote sensing
- Built-in voltage and current measurement – this is another alternative to adding expensive instruments
- Physical size of the unit – power supplies can get big and heavy
- Triggering options
- Programmable output impedance
- Output ranges, multiple outputs
- Overload protections (V and I compliance)
- Lead lengths
- Safety

Fixture

I have already mentioned fixtures several times and I plan to write about designing fixtures as a separate article, but I'll cover some basic considerations here. The idea of a fixture is that you design a piece of hardware that interfaces with the exact pin-out or test points, or whatever the access method of your DUT is. At one end are all the signal lines from the test system and at the other end is the DUT interface. It makes the test system more reusable and also provides a place to put any necessary signal conditioning circuitry or switching. A fixture might also contain hardware to facilitate testing the DUT, like loads. Loads could be fixed in value and location or programmable via switching. Some thought must also go into designing fixtures for operators to use in manufacturing. Are they rugged and ergonomic so that operators can easily place the DUT in them over and over without error or anything breaking? Are they easy to duplicate and deploy?

ITA and Mass Interconnect

The mass interconnect is a connector block where all of the instruments are wired out and made accessible. It consists of a receiver attached to the test system that is populated with receiver modules that wire to the instruments. The ITA (interchangeable test adaptor) is the other side of the connector and interfaces the test system to the fixture. The line between ITA and fixture can be blurry. The ITA may be just an interconnect, or it may contain switching and signal conditioning if it is desired to keep the fixtures very simple. The big advantage of the mass interconnect is that it makes your test system reusable and general, so different products can be quickly swapped and tested on the same tester. Figure 2 shows the block diagram of test system to DUT.

Figure 2. Test System to DUT connections
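
Going back to the 4x accuracy rule of thumb from the instrument selection steps, a quick check like the one below can be run against a list of candidate instruments before anything is purchased. This is only a sketch: the function names and the example numbers are made up for illustration, and it reads the rule as "the instrument's error should be no more than one quarter of the tolerance you need on the measurement", which is my interpretation rather than a formal requirement.

```python
def accuracy_ratio(required_tolerance_mv, instrument_accuracy_mv):
    """Return how many times tighter the instrument is than the tolerance.

    Both arguments are +/- values in the same unit (millivolts here).
    """
    if instrument_accuracy_mv <= 0:
        raise ValueError("instrument accuracy must be a positive number")
    return required_tolerance_mv / instrument_accuracy_mv


def meets_rule_of_thumb(required_tolerance_mv, instrument_accuracy_mv,
                        min_ratio=4.0):
    """True if the instrument is at least min_ratio times more accurate
    than the measurement needs to be (4x by default)."""
    ratio = accuracy_ratio(required_tolerance_mv, instrument_accuracy_mv)
    return ratio >= min_ratio


# Hypothetical example: a rail must be verified to +/-50 mV.
# A DMM specified at +/-10 mV gives a 5:1 ratio, a DAQ at +/-20 mV only 2.5:1.
for name, accuracy_mv in [("DMM", 10), ("DAQ", 20)]:
    ok = meets_rule_of_thumb(50, accuracy_mv)
    print(name, accuracy_ratio(50, accuracy_mv), "OK" if ok else "too coarse")
```

Only the worst-case measurements need to go through a check like this; if the tightest measurement passes on an instrument, the looser ones on the same range generally will too.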

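The 70% loading guideline from the system power list is also easy to sanity-check with a little arithmetic once you have the nameplate ratings of everything in the rack. The sketch below is an illustration under assumptions: the instrument names and VA figures are hypothetical, and it treats "about 70%" as an upper limit on the steady-state draw.

```python
def load_fraction(draws_va, available_va):
    """Fraction of the available capacity used by all loads together."""
    if available_va <= 0:
        raise ValueError("available capacity must be positive")
    return sum(draws_va.values()) / available_va


def within_power_budget(draws_va, available_va, max_fraction=0.70):
    """True if the total draw stays at or below max_fraction of capacity."""
    return load_fraction(draws_va, available_va) <= max_fraction


# Hypothetical rack on a 120 V, 20 A circuit (2400 VA available).
available_va = 120 * 20
rack = {
    "rack PC": 400,
    "PXI chassis": 600,
    "DUT power supplies": 300,
    "DMM": 50,
}

print(load_fraction(rack, available_va))        # 1350 / 2400
print(within_power_budget(rack, available_va))

# Adding a 500 VA electronic load pushes the rack past the guideline.
rack_with_load = dict(rack, **{"electronic load": 500})
print(within_power_budget(rack_with_load, available_va))
```

This only covers steady-state draw. Inrush current at power-up is a separate concern, which is why the sequential power-up method in the list still matters even when the budget check passes.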
Summary

Building a test system can be a pretty big job with a lot of factors to consider. Having a good test strategy and knowing what needs to be accomplished is key to building a successful test system. The main points are:

- Strategy – What kind of testing is being performed? R&D, Design V&V or Manufacturing. What technology will the tester use? PXI card cage or rack based with standalone instruments.
- Instruments – What instruments will you need to make the measurements you need to make?
- System Control and Software – What programming language and support software will be used and is needed? What will be developed or purchased?
- Switching – Where is the best place to put switching to meet the needs of your testing?
- Rack – There are a number of items to remember to make a useful and usable rack.
- Power – Two kinds of power, system and DUT.
- Fixture – The fixture interfaces the test system with the DUT.
- ITA and Mass Interconnect – The mass interconnect makes the test system generic so that multiple fixtures and DUTs can be tested. The ITA is used with the mass interconnect and works with the fixture.

Test engineering is a sub-discipline of electrical engineering that isn't really well defined, but people who are test engineers tend to know it when they see it. I have been working as a test engineer for the last seven years.

I work for a large medical device maker where I focus on manufacturing electrical test at the circuit board level. When I think of test engineering it comes down to instrumentation, measurements and automated test systems. I'd like to expand my view of what is, sort-of is and isn't test engineering in a way that other test engineers can agree with.

What test engineering is not

Software testing
Software testing is not what test engineers do, because test engineers tend to have a background in electrical engineering or as an electrical technician. Software testing is something that computer scientists do (or probably don't want to do).

Software development / Software engineering
Test engineers do a lot of programming but they are not software developers. The code that test engineers write is really more like scripts to control instruments and take measurements.

Design verification testing
I don't really think of design verification as what test engineers mainly do. Test engineers focus on testing products during the manufacturing process. Testing that the design does what it's supposed to do is what the design engineer does.

What test engineering (sort-of) is

Quality assurance
At a large company there will be quality engineers or a whole QA department. They do things like analyze products for failure modes and track product failure trends. They might be the people who ask the test engineers to implement new test methods or test coverage to detect a newly discovered failure mode. They will commonly do more data and statistical analysis than test engineers. They also focus on the predicted reliability of a product and on analysis of product returned from the field.

Manufacturing engineering
This job overlaps more often with the job of the test engineer, but again at a large company they are usually separate positions. The manufacturing engineer focuses on taking the test software and systems developed by the test engineers and deploying them to the manufacturing floor. They will focus on the yield of the product being tested and on making sure that the test systems are working and under control. "Under control" refers to using statistical methods to look at the measurements a test system is making to be sure it's still measuring correctly. If one device under test fails it's probably a bad part; if nine out of the last ten fail, something has gone wrong with the test system.

What test engineering is

Test engineering is all about measurements and automated test systems that make measurements on products on the manufacturing floor. Depending on the test strategy being implemented, electronics may be tested at all or some of the stages of being built. Testing may take place on the individual components, integrated circuits, PCBs and finished products.

Component testing
Components may be tested by a vendor, sampled and tested, or 100 percent tested before they are used in a product. Large companies often have people other than test engineers working with component suppliers to determine how their components are tested and to manage that relationship.

Integrated circuit testing
Integrated circuit testing is a specialty of test engineering and is the most complex and costly kind of testing. It is often performed by the chipmakers themselves or outsourced, due to the high cost of buying "big iron" testers. A company like Teradyne focuses on building extremely expensive and large test systems for integrated circuits.

PCB testing
The PCB can be a very flexible place to perform testing. With a PCB there is still access to the circuits through test pads (this isn't always true; some products are so small that there is no real estate to spare for test access, or the boards are multisided) and also access through techniques like BIST and boundary scan. Also, it's still cheaper to find a problem with a PCB than to find it with a finished product that won't turn on. Since it is so flexible, how a PCB is tested varies. In my industry there is often a bed-of-nails type DUT interface alongside a test system. But the list of variables includes cost, volume, PCB complexity, application and level of regulation. This all gets to test strategy, which I plan to write about in the future.

Finished product (functional) testing
Functional testing is a somewhat broad term. It could be interpreted to mean that when you power up and operate an electronic product it simply works, or it can mean testing some higher-level functionality at a lower level of assembly. For example, while still at the PCB level a product could have sub-sections of the system functionally tested for some higher-level measurement, like the bandwidth of an amplifier. Another way to think of it is that once you lose any access to the system, like probing test pads, and the product is complete, then functional testing is taking place.

Inspection
It's worth mentioning that inspection is slightly different from testing. Testing is where a product is built and then measured to determine if it has defects or functions correctly. When a product is tested and it doesn't work, there is no going back; you either scrap it or repair it. With inspection a product is observed to check for defects so they can be caught earlier. For example, you could test a completed circuit board, or you could inspect an unpopulated PCB to look for a broken trace so that all the time and expense of populating that PCB with components can be saved.

Reliability testing
I mentioned that reliability activities are not always the job of the test engineer. That might seem a bit strange, but there really is a difference between testing a product to see if it is working right now, when it is shipped, and performing tests to try to predict if a product will continue to work for its expected lifespan. These latter activities would be considered reliability testing. One such test would be a burn-in test, where the product is tested in a high temperature environment in an attempt to remove weak products. Incidentally, just testing something at high temperature doesn't reveal many latent problems. What is much more effective is to test in a temperature cycle, forcing the product to experience the stress of expansion and contraction. Humidity is another important environmental variable in determining reliability.

Summary

There are a lot of issues to consider and many steps in the process of testing electronic products. I have gone over it really fast in an effort to establish the basics without going into a lot of detail. I'll dive into many of these topics in more detail in the future, and hopefully I won't miss too many important considerations in test engineering.

As a test engineer, before you can start building a test system or writing test code you have to decide on a test strategy. You have some sort of electronic product that needs to be tested before it can be sent to the field (sold). The question is, what problems could the product have, and where and how are they going to be detected? Most companies already have some idea of what their strategy for test and overall product quality is, but these decisions have to be made or were made at some point.

Three Ways to Fail There are three ways an electronic device can fail 1. Poor quality raw materials 2. Design is incorrect 3. Process variation That’s it, three ways. So, what does each of these entail? 1. Poor quality raw materials An example of this would be defective components or bad PCBs. 2. Design is incorrect This is just what it sounds like, the product as designed does not meet its specifications. These errors should not have to be checked for on every instance of a product that is built in manufacturing. These problems should be caught early in the design validation and verification. However, design problems can show up in the manufacturing test, particularly when high volume manufacturing measurement data is analyzed. Some design problems - Does not meet analog specifications - Digital logic error - Functional failures 3. Process variation This type of failure is basically manufacturing errors. Here is a list of a few manufacturing errors - Shorts - Opens - Missing component - Wrong component - Backwards component - Bent Leads - Functional Failures Failure Mode Effects Analysis There are certainly a lot of failure modes that can happen to electronics in general but how do you know what failure modes to test for in a particular product? One way is to perform an FMEA. An FMEA is a formal method for determining what could go wrong so you can design a test strategy to check for all these failure modes. It is easy to find an FMEA worksheet on the internet to see how it works. The idea is to think of all the possible failures for each function or sub-system (or however you break it down) and then rate those failures by how serious their consequences are, how frequently they occur, and how easily they are detected. Here are some typical fields in the FMEA table - Function - Failure Mode - Effects - Severity Rating – this could be 0 to 10 or 0 to 3 or whatever works. 
- Causes - Occurrence Rating - Current Controls – what should be preventing this failure from happening in the first place - Detection Rating - Risk Priority Number (RPN) – This is a method to make an overall ranking of the items, this is the three rankings multiplied together. RPN = S * O * D. Test Strategy Complexity In reality, a test strategy comes down to the type of product you are testing and how much money you have to spend to test it. If you are testing a toy fire truck with an electronic siren I’m sure all the testing that takes place is pressing the button to see if the siren goes off on the completed toy, if that. Of course in a mission critical situation the test strategy could be very complex and expensive and would require a lot of work to design. Here are some additional factors to consider when developing the strategy. Cost – How much budget do you really have to work with? How much testing can you do with that money and where are the most important places to apply it? Volume – What volume of this product is being built? Product Complexity – How complex is this product being tested? Will it require a lot or little testing? Will the testing have to be broken up into multiple levels and tactics? Level of reliability needed – How reliable does the product you are testing really need to be? Ideally, it would be 100% for anything, but it’s really more about what testing is cost effective to perform. Environment where the product is used – will you have to duplicate any extreme conditions to verify that the product will work under those conditions? How to handle ASICs and components? – Will you assume that all ASIC and components are good before they are used on a board? Do you need to sample or fully test these components in-house? Outsource – will any of the testing be outsourced to another company? Is there a way to outsource part of the testing or building equipment that makes sense? 
Environmental Stress Screening (ESS) or reliability testing – Will you have to attempt to predict the reliability of the product, or just verify that it is working correctly as it is built?

Test Levels – Is there a way to divide up all the necessary testing into different levels that will make the testing simpler or cheaper? Some test levels include:

- Incoming inspection, vendor test
- Prescreen (auto shorts, opens, manual inspection)
- In-circuit test or manufacturing defects analyzer
- Board-level burn-in or ESS
- Functional or performance
- Hot mockup
- System burn-in
- Field test

Test Tactics – The test strategy is the why and the test tactic is the how. What methods will be used to best implement your test strategy? Some test tactics include:

- In-circuit test
- Functional
- Emulation
- Inspection
- Hot mockup
- Design for test
- Boundary scan
- ESS

Two Test Strategies

There are two general strategies that can be taken when building a product:

1. Build it correctly the first time
2. Build it, test it and repair the failures

1. Build it correctly the first time

This may seem like what you would obviously try to do no matter what, but it goes beyond just trying to do a good job. This is really the idea of putting inspection and process monitoring into your manufacturing process. As an example, components may be screened before they go onto a circuit board, then the circuit board undergoes some type of inspection, maybe an IR inspection, and process monitors are put on this inspection. Finally, the final product is built and given some final functional testing. It may be more elaborate than that, but the idea is to end up with nearly 100% yield at final test, so there is no need for scrap, debug and repair. This is really what Lean manufacturing and Six Sigma type concepts are all about. Judging from the volume of information about these topics on the internet, this strategy is very popular right now.

2. Build it, test it and repair the failures

Here the products would be built without worrying too much about inspection or process monitoring, and then the products would undergo electrical testing. Anything that fails the testing is then debugged and repaired. The advantage here is that you save a lot of time, cost and effort building the products. So, if the yield is okay and this method is appropriate for the product being tested, it should be fine. The disadvantage is that you end up with either lots of scrap or lots of products to debug and repair. The testing that was performed should be an aid, but it still may be pretty difficult to debug and repair the problems.

Summary

Few engineers really get the chance to develop a test strategy from scratch because it can be tough to change the momentum of a company at such a fundamental level. However, an engineer who knows all the issues that go into a test strategy can pick their spots and argue a case for changing them. A test strategy can be very simple or very complex; it all depends on the product you are testing and the goals of your company. |
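As a companion to the FMEA section above, the RPN ranking can be sketched in a few lines of Python. This is only an illustration, not a standard worksheet format: the `FailureMode` class, the `rank_by_rpn` helper, the example rows and their ratings are all made up, and the sketch assumes a 1-to-10 scale where a higher detection rating means the failure is harder to detect.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    """One row of a hypothetical FMEA worksheet."""
    function: str
    failure_mode: str
    severity: int    # S: how serious the consequences are
    occurrence: int  # O: how frequently the failure occurs
    detection: int   # D: assumed convention, higher = harder to detect

    @property
    def rpn(self) -> int:
        # RPN = S * O * D, the three ratings multiplied together
        return self.severity * self.occurrence * self.detection


def rank_by_rpn(rows):
    """Return the rows sorted from highest to lowest RPN."""
    return sorted(rows, key=lambda row: row.rpn, reverse=True)


# Made-up example rows; the ratings are illustrative only.
worksheet = [
    FailureMode("Siren output", "Missing component", severity=7, occurrence=3, detection=2),
    FailureMode("Power input", "Backwards component", severity=9, occurrence=2, detection=4),
    FailureMode("Button input", "Open", severity=5, occurrence=4, detection=1),
]

for row in rank_by_rpn(worksheet):
    print(f"{row.rpn:4d}  {row.function}: {row.failure_mode}")
```

Sorting by RPN puts the failure modes that most deserve test coverage at the top of the list. Note that if a scale starts at 0, any zero rating forces the whole product to zero, which is one reason this sketch assumes ratings start at 1.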


|
